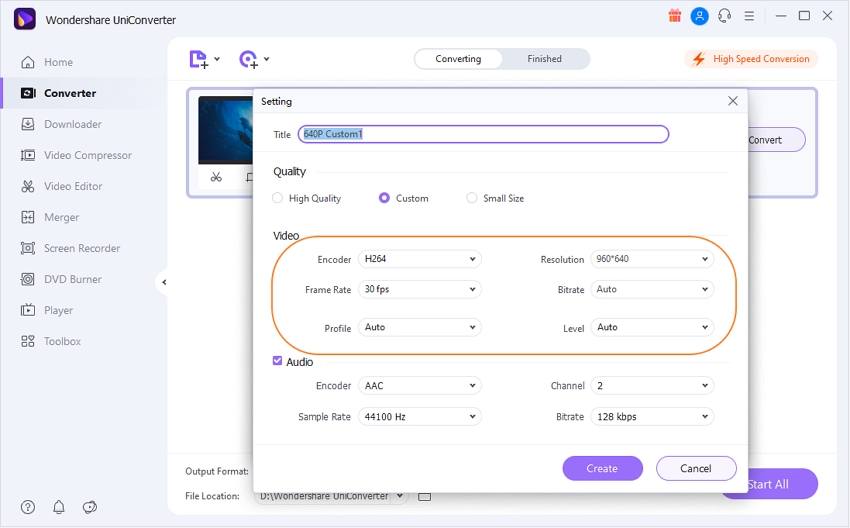

Generating video previews with Node.js and FFmpeg

Maciej Cieślar, a JavaScript developer and a blogger.

Every website that deals with video streaming in any way has a way of showing a short preview of a video without actually playing it. YouTube, for instance, plays a 3- to 4-second excerpt from a video whenever users hover over its thumbnail. Another popular way of creating a preview is to take a few frames from a video and make a slideshow. We are going to take a closer look at how to implement both of these approaches.

Manipulating a video with Node.js itself would be extremely hard, so instead we are going to use the most popular video manipulation tool: FFmpeg. In the documentation, we read:

> FFmpeg is the leading multimedia framework, able to decode, encode, transcode, mux, demux, stream, filter and play pretty much anything that humans and machines have created. It supports the most obscure ancient formats up to the cutting edge. No matter if they were designed by some standards committee, the community or a corporation. It is also highly portable: FFmpeg compiles, runs, and passes our testing infrastructure FATE across Linux, Mac OS X, Microsoft Windows, the BSDs, Solaris, etc. under a wide variety of build environments, machine architectures, and configurations.

Boasting such an impressive resume, FFmpeg is the perfect choice for video manipulation done from inside of the program, able to run in many different environments.

FFmpeg is accessible through the CLI, but the framework can be easily controlled through the node-fluent-ffmpeg library. The library, available on npm, generates the FFmpeg commands for us and executes them. It also implements many useful features, such as tracking the progress of a command and error handling.

Although the commands can get pretty complicated quickly, there’s very good documentation available for the tool. Also, in our examples, there won’t be anything too fancy going on.

The installation process is pretty straightforward if you are on a Mac or Linux machine.

The fluent-ffmpeg library needs the ffmpeg executable either to be on our $PATH (so that it is callable from the CLI as ffmpeg) or to be located through paths that we provide in environment variables, for example in a .env file: `FFMPEG_PATH="D:/ffmpeg/bin/ffmpeg.exe"`

Both paths, for ffmpeg and for ffprobe, have to be set if they are not already available in our $PATH.

Now that we know what tools to use for video manipulation from within the Node.js runtime, let’s create the previews in the formats mentioned above. I will be using Childish Gambino’s “This is America” video for testing purposes.

The video fragment preview is pretty straightforward to create; all we have to do is slice the video at the right moment. In order for the fragment to be a meaningful and representative sample of the video content, it is best if we get it from a point somewhere around 25–75 percent of the total length of the video. For this, of course, we must first get the video duration.

In order to get the duration of the video, we can use ffprobe, which comes with FFmpeg. ffprobe is a tool that lets us get the metadata of a video, among other things.

Let’s create a helper function that gets the duration for us: `export const getVideoInfo = (inputPath: string) =>`

As was the case with the previous function, we must first know the length of the video in order to calculate when to extract each frame.

See the FFmpeg and x264 Encoding Guide for additional information on controlling the output quality.
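The timing rules described above (start the fragment somewhere in the 25–75 percent window, and derive the frame times from the video duration) can be sketched as a few pure functions. This is a minimal sketch, not the article's own code: the names `pickFragmentStart`, `frameTimestamps` and `buildTrimArgs` are hypothetical, the duration is assumed to be already known in seconds (for example from ffprobe), evenly spaced frames are an assumption, and the argument list uses only the standard ffmpeg options `-y`, `-ss`, `-i`, `-t` and `-an`.

```typescript
// Hypothetical helpers for illustration; all times are in seconds.

// Picks a start time so that the fragment lies inside the 25-75 percent
// window of the video whenever it fits. `rand` is injectable for testing.
function pickFragmentStart(
  durationSec: number,
  fragmentSec: number,
  rand: () => number = Math.random,
): number {
  if (durationSec <= 0 || fragmentSec <= 0) {
    throw new RangeError("durations must be positive");
  }
  // A fragment as long as (or longer than) the video can only start at 0.
  if (fragmentSec >= durationSec) return 0;

  const windowStart = durationSec * 0.25;
  // Latest start that still ends by the 75 percent mark.
  const windowEnd = Math.max(windowStart, durationSec * 0.75 - fragmentSec);
  const start = windowStart + rand() * (windowEnd - windowStart);
  // Never let the fragment run past the end of the video.
  return Math.min(start, durationSec - fragmentSec);
}

// Returns `frameCount` timestamps, one at the midpoint of each equal slice
// of the video (an assumed spacing, chosen to avoid the very first frame).
function frameTimestamps(durationSec: number, frameCount: number): number[] {
  if (durationSec <= 0 || !Number.isInteger(frameCount) || frameCount <= 0) {
    throw new RangeError("need a positive duration and a positive integer count");
  }
  const step = durationSec / frameCount;
  return Array.from({ length: frameCount }, (_, i) => (i + 0.5) * step);
}

// Builds an ffmpeg argument list that cuts a silent fragment out of a video.
function buildTrimArgs(
  inputPath: string,
  outputPath: string,
  startSec: number,
  fragmentSec: number,
): string[] {
  return [
    "-y",                      // overwrite the output file if it exists
    "-ss", String(startSec),   // seek in the input before decoding
    "-i", inputPath,
    "-t", String(fragmentSec), // length of the fragment
    "-an",                     // drop the audio (assumed: previews are silent)
    outputPath,
  ];
}
```

The resulting array could be handed to whatever runs ffmpeg in a given project; with fluent-ffmpeg the same values would instead go into its own chained calls.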

The example relies on the following filters:

- alphaextract and alphamerge for the alpha component.
- split to make multiple copies of the alpha component.
- transpose/vflip/hflip or whatever combination of similar filters you prefer.
- overlay to place each video on the canvas.

Example command: ffmpeg -i video.mkv -loop 1 -i mask.png -filter_complex \

Either download a Linux build of ffmpeg or follow a step-by-step guide to compile ffmpeg. You will need to make an image that contains an alpha mask. It needs to be the same frame size as your video input, so if video.mkv is 1920x1080, then mask.png also needs to be 1920x1080. You can download the alpha mask from this example.

Split was used because filter graphs must have unique edges, meaning every label connects two "nodes" or filters. So you have to split the output of any filter if you want to send its output to two places. The black background color of the pad filter is visible in the corners. The crop filter can be used to remove it if you prefer. The audio is stream copied instead of being re-encoded.
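The crop filter mentioned above takes its geometry as `w:h:x:y`, and the expressions may refer to the input size through variables such as `in_w` and `in_h`. A minimal sketch of building such a filter string follows; the helper name `centeredCrop` is hypothetical, and the margin is an assumed value that the caller would pick to match how much of the padded background should be trimmed.

```typescript
// Hypothetical helper: builds a crop filter string that trims `marginPx`
// pixels from every edge, using the crop filter's in_w / in_h variables.
function centeredCrop(marginPx: number): string {
  if (!Number.isInteger(marginPx) || marginPx < 0) {
    throw new RangeError("margin must be a non-negative integer");
  }
  const width = `in_w-${2 * marginPx}`;  // output width expression
  const height = `in_h-${2 * marginPx}`; // output height expression
  // x and y are the top-left corner of the kept area.
  return `crop=${width}:${height}:${marginPx}:${marginPx}`;
}
```

For a 20-pixel margin this yields `crop=in_w-40:in_h-40:20:20`, which can be used on its own with `-vf` or appended to a chain inside a larger filter graph.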